“Tokens. Give them to me now. I need them fast, I need them cheap, and I can’t wait.”

That’s the repeated rallying cry from developers building software on top of generative AI models — or at least that’s what Parasail CEO Mike Henry hears every day. Parasail builds cloud computing infrastructure specifically for AI inference, and Henry told TechCrunch the platform already generates 500 billion tokens per day for customers. If that’s not peak tokenmaxxing, nothing is.

Henry previously held an executive role at Groq, the LLM-focused semiconductor designer, where he built the company’s cloud product line. That work gave him an early insight: developers building AI-native software would need specialized cloud infrastructure tailored to their particular workloads. One year after exiting stealth mode, Parasail has now closed a $32 million Series A funding round to scale this inference-focused offering globally.

Although Henry has a background in physical chip design, Parasail has no plans to rely exclusively on silicon it owns and operates. The company does own a portion of its GPU hardware, but its core strategy relies on renting compute capacity from 40 data centers across 15 countries, plus sourcing excess capacity from public liquidity markets. Parasail orchestrates all this distributed infrastructure behind the scenes to drive down the cost of its customers’ inference requests.

By intelligently allocating workloads across its network and avoiding peak-demand congestion, the startup aims to outcompete firms that run their own silicon, which are often constrained by pre-existing customer commitments and fixed workload allocations.

Parasail’s long-term growth opportunity hinges on the ongoing proliferation of open-source AI models and autonomous agents being built outside of the top frontier AI labs. Company leaders and investors agree this shift is being fueled by the rising costs and growing friction of relying on closed inference offerings from incumbents like Anthropic and OpenAI.

Andreas Stuhlmüller, CEO of Elicit — a startup that raised a $22 million Series A to build an AI research assistant for scientific literature — says a hybrid AI architecture is quickly becoming the new industry standard. His enterprise clients at top pharmaceutical companies use Elicit’s LLM-powered tool to review and analyze data pulled from tens of thousands of academic papers.

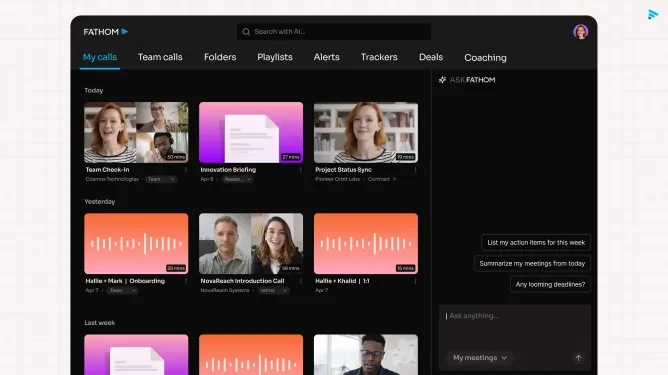

“We’ve shifted the bulk of our workload to open models because pushing hundreds of thousands of requests to third-party API endpoints creates far too much friction,” Stuhlmüller told TechCrunch. That challenge has grown as Elicit leans more heavily on AI agents to improve its product; these agents break down complex tasks and operate over longer processing windows to deliver better outcomes. Open models handle low-cost initial screening work before a more powerful frontier model generates the final polished output.

As AI agents become a standard component of modern software development, the total volume of model queries is skyrocketing, and that surge is driving major investment in infrastructure startups like Parasail that specialize in low-cost inference. Samir Kumar, a partner at Touring Capital, which co-led the new Series A round, told TechCrunch he expects inference to account for at least 20% of total software development costs in the coming years.

How much of this fast-expanding market can Parasail capture? In the crowded cloud compute space, Henry argues that his firm’s laser focus on inference (it does not support model training workloads at all) and its willingness to work with startup customers without forcing them into long-term binding contracts set it apart. That combination differentiates Parasail both from large cloud providers that prioritize big enterprise clients and even from better-funded cloud inference competitors like Fireworks AI and Baseten.

Of course, there is a unique risk to this approach: nearly all of Parasail’s customer base is made up of early-stage seed and Series B AI startups, operating in one of the most unpredictable sectors in tech today.

Steve Jang, a partner at Kindred Ventures (the other co-lead of the funding round), says the long-term economics of AI model deployment will always demand the kind of distributed compute brokerage Parasail has built. That need will only grow as AI sees widespread adoption for high-volume use cases like content generation and robotics.

“Everyone keeps talking about an AI bubble, but there is no AI bubble,” he told TechCrunch. “Demand for inference capacity is already far outstripping supply.”

Parasail raises $32M to feed tokenmaxxing AI developers